Garbage in, garbage out

From data as asset to data as liability.

How likely? How soon? What impact?

"Data is the new oil." Breathlessly repeated at conferences and in classrooms the world over, this cliché has come to represent the worst of big data's hype. The phrase has also unintentionally served as a harbinger of data's risks and harms, which are growing at an alarming and accelerating pace. Already, they threaten to undermine the legitimacy and efficacy of urban tech.

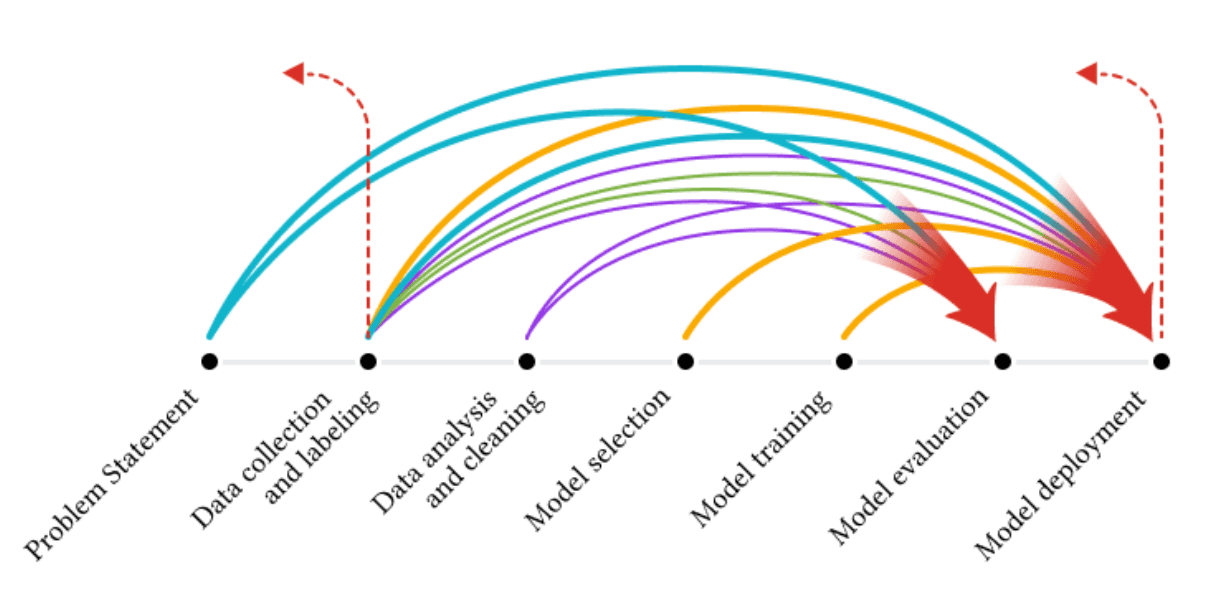

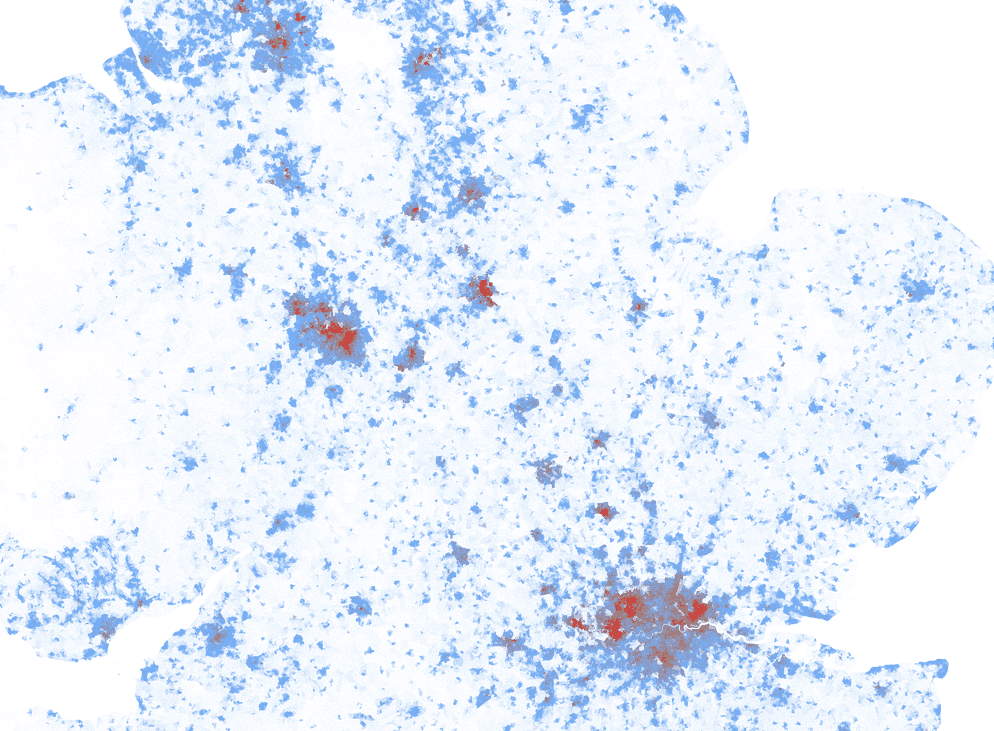

The warning signs are everywhere. Sensed data may not be reliable: authentication techniques employing blockchain may be one solution, but the risk of faked data leaking into mission-critical applications is growing fast. And this won't be our first encounter with bad data. Racial bias is now widely understood to be endemic in large data sets, even by those who created and profit from them. Still, many issues go unaddressed because data work is undervalued by AI researchers and practitioners, who would rather work on algorithms and analytics. And important tools like synthetic populations, which could help address many data risks, are often improperly used.

All of this points towards a shaky future foundation for urban tech. Will our data practices prove sufficient to withstand close scrutiny, or the extreme pressures of unanticipated stress?

Signals

Signals are evidence of possible futures found in the world today: technologies, products, services, and behaviors that are already here and that we expect could become more widespread tomorrow.
