Bias controls

From awareness to mitigation.

How likely? How soon? What impact?

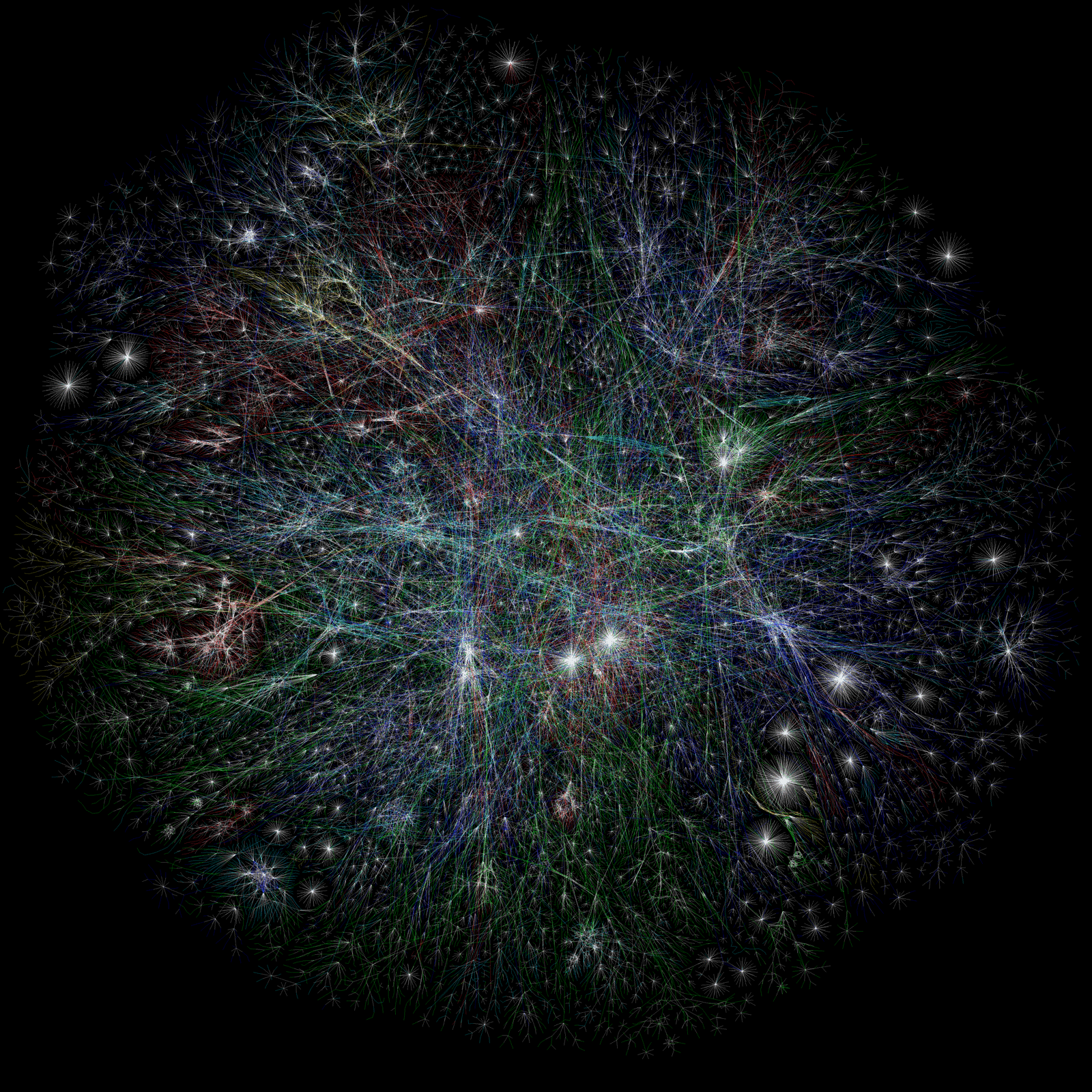

The automation of government decision-making is an old idea that has long provoked both fascination and fear. Computer analysis can be a powerful tool against incompetence and corruption. Yet we fear the seemingly arbitrary recommendations of software based solely on data and computer calculations—however rational they may be.

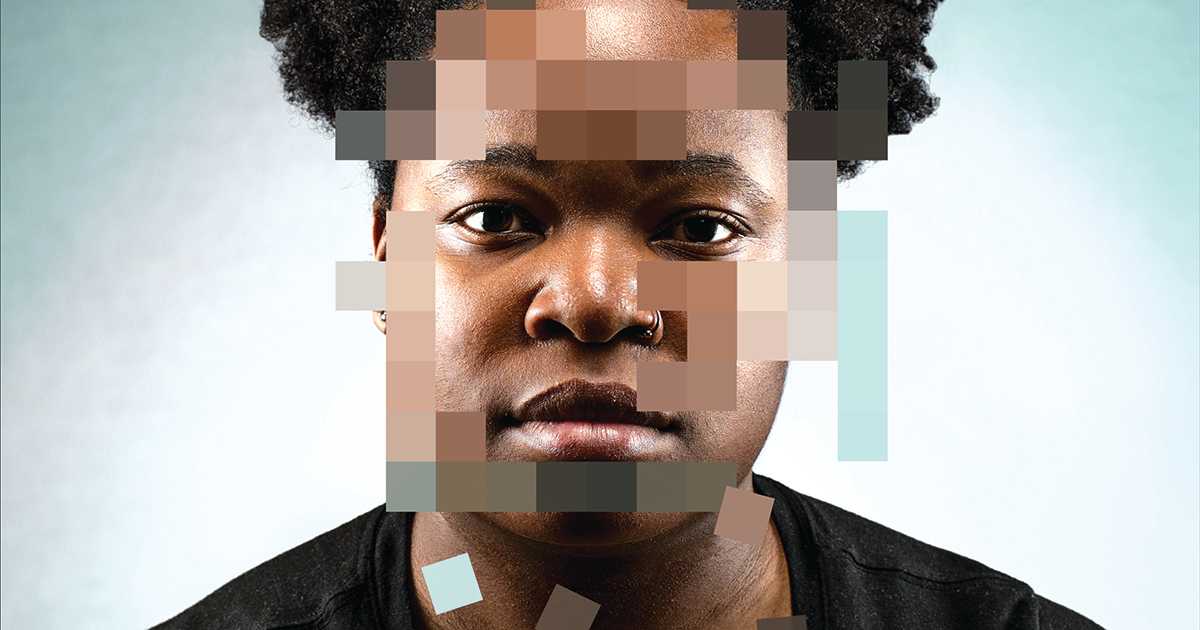

In recent years, rapid advances in decision-support systems have moved the bar, both in government tools and in private systems that affect the public at large. But we have overcorrected, and the harms are becoming clear. A new push for controls on the controls is gaining steam.

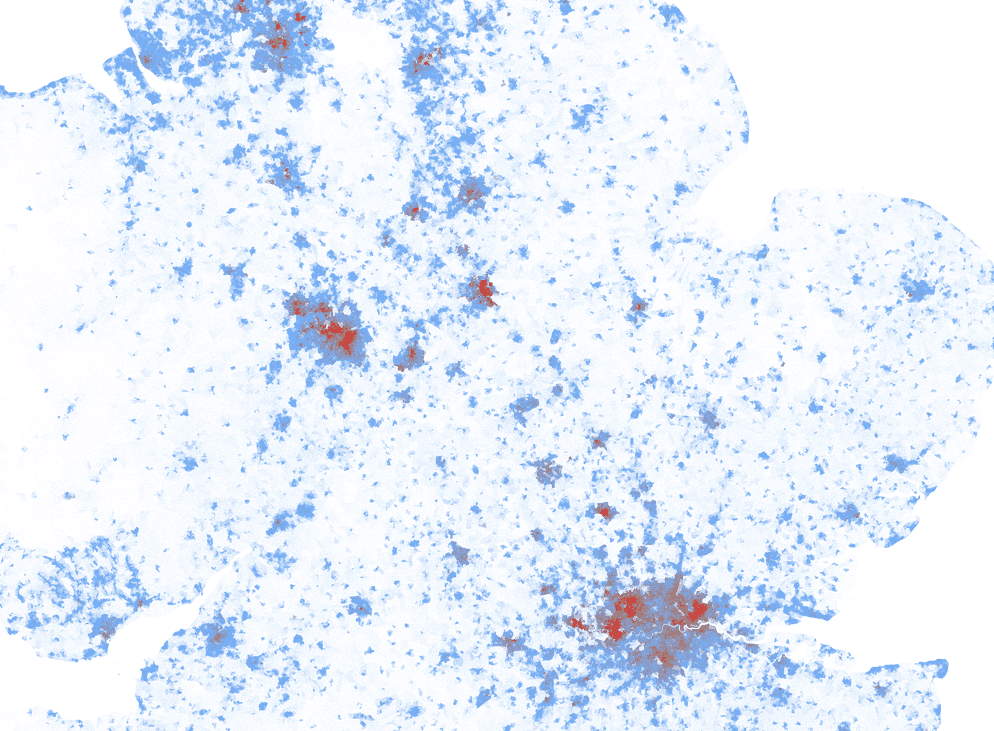

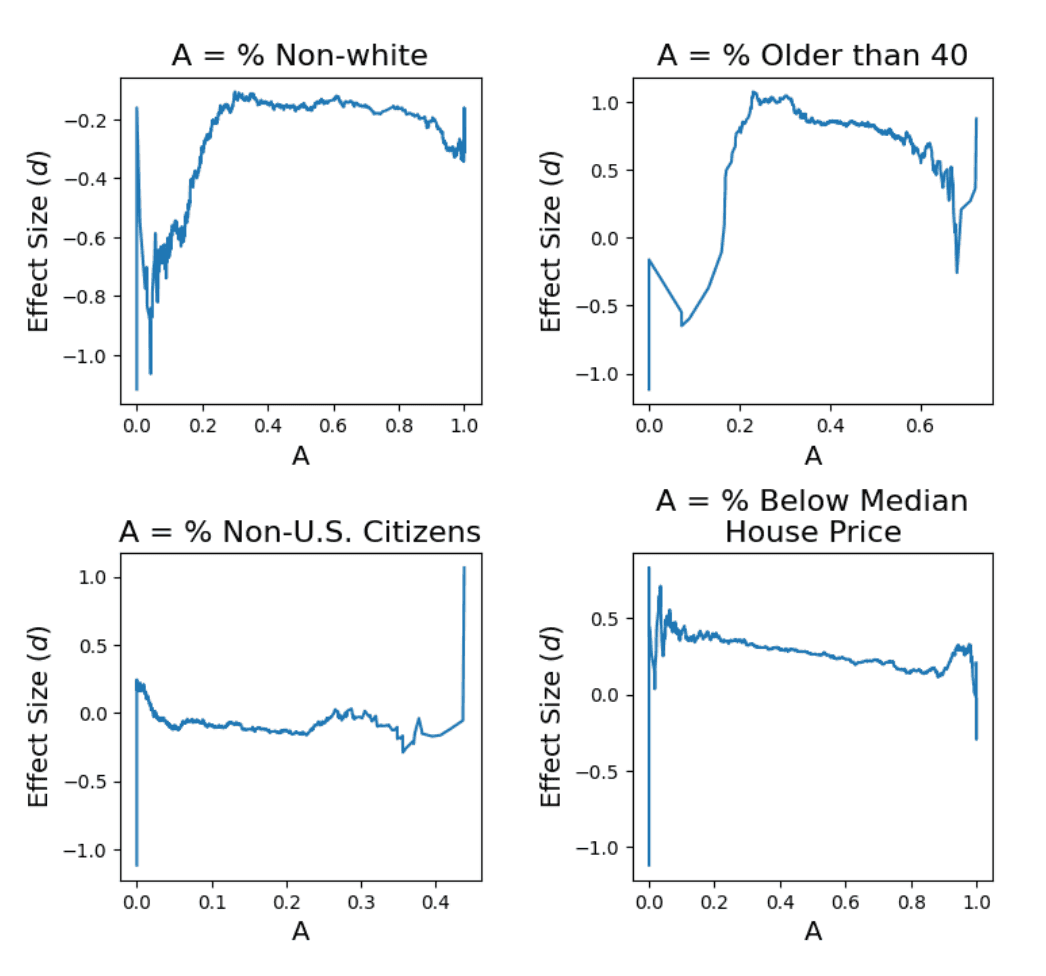

The problem starts with data. It is now widely accepted that most urban data, not just some, is biased. Collection and curation need to be rethought, with strong oversight and controls put in place to monitor and mitigate bias in data over time. One possibility is bots that look for bias in real time, making sure that we aren't simply projecting old distortions onto new data. Finally, the new data landscape will require a deep reexamination of how we make decisions with computers, and how these tools activate implicit biases in individuals and in organizations.

Signals

Signals are evidence of possible futures found in the world today: technologies, products, services, and behaviors that are already here but that we expect could become more widespread tomorrow.
